This paper is available on arxiv under CC 4.0 license.

Authors:

(1) Kiriaki Frangias;

(2) Andrew Lin;

(3) Ellen Vitercik;

(4) Manolis Zampetakis.

Table of Links

Warm-up: Agents with known equal disutility

Agents with unknown disutilites

Conclusions and future directions, References

B Omitted proofs from Section 2

C Omitted proofs from Section 3

D Additional Information about Experiments

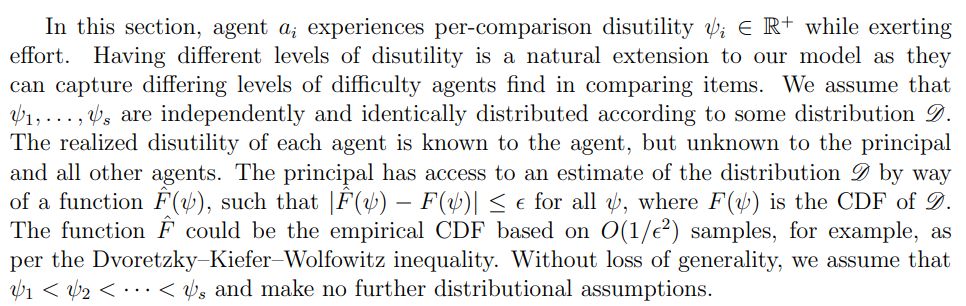

3 Agents with unknown disutilites

We now significantly expand our model to study heterogeneous agents with differing, unknown disutilities. The full proofs of all results in this section are in Appendix C.

3.1 Model and notation

Some agents might experience high disutility when exerting effort, so it may not be in the principal’s best interest to incentivize all agents to exert effort. Therefore, the principal must set the payment so that the number of agents incentivized is utility maximizing for the principal. For the rest of this section, we denote by g the number of agents that the principal aims to incentivize; in Theorem 3.6, we describe an efficient optimization problem that solves for the optimal value of g, denoted g∗. The principal controls the number of incentivized agents only through the payment function. Hence, the realized number of agents that exert effort, denoted ˆg, is a random variable with a distribution that depends on the payment. Under this model, the principal will:

1. Calculate g∗, the optimal number of agents to incentivize.

2. Announce the payment pg∗ ∈ R+ (based on g∗).

3. Verify a subset of comparisons assigned to the agents.

4. Assign each comparison to a total of r agents.

5. Pay pg∗ to all agents not identified as “bad.”

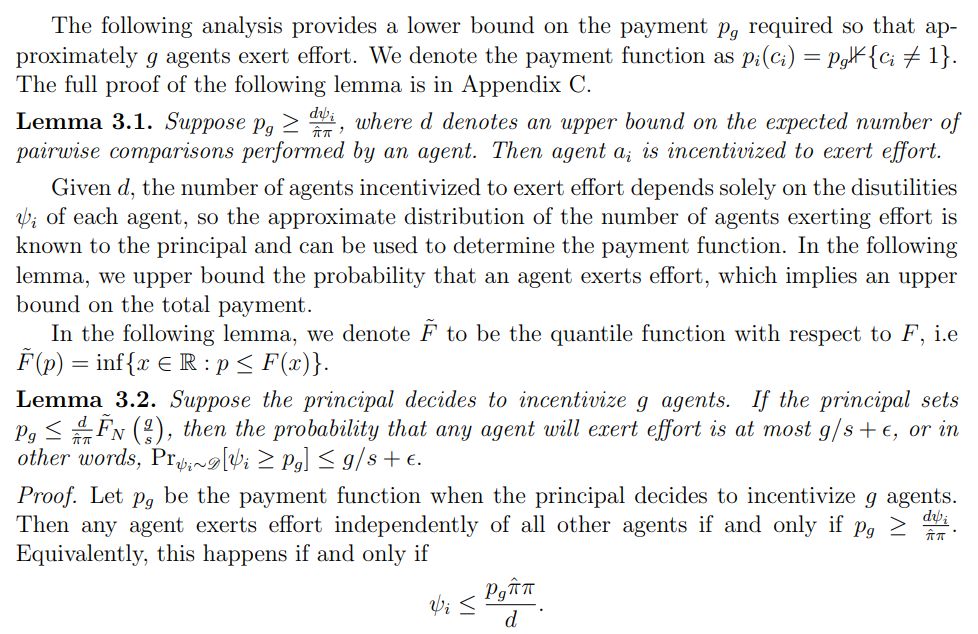

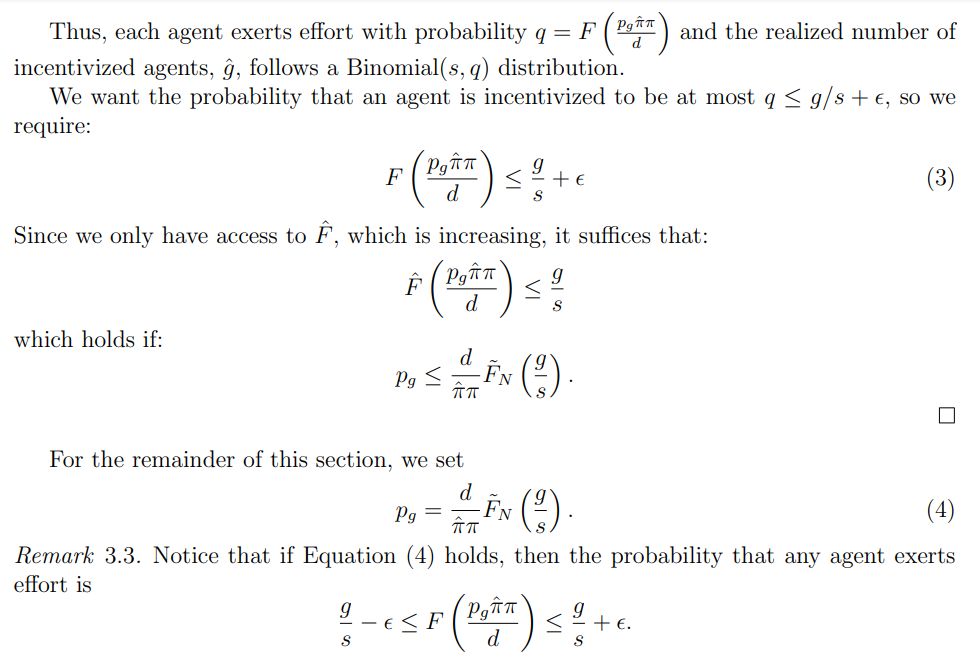

3.2 Payment function

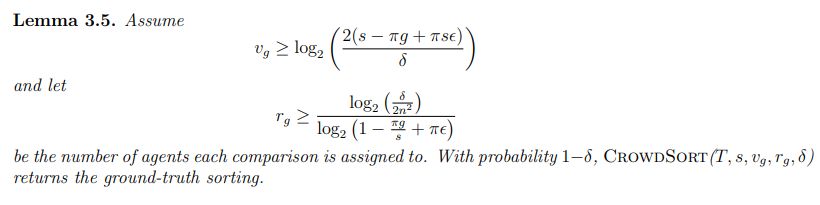

3.3 Correctness of CrowdSort

Next, we bound the redundancy required to ensure that CrowdSort returns the groundtruth ordering.

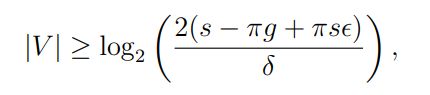

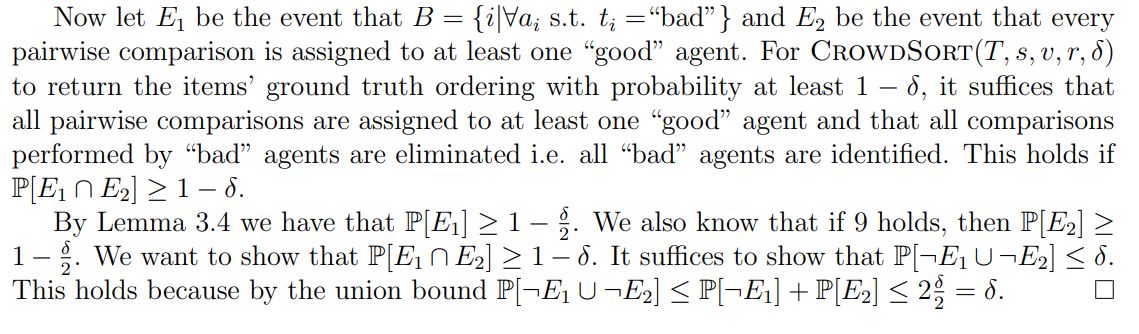

Proof. We know from Lemma 3.4 that if

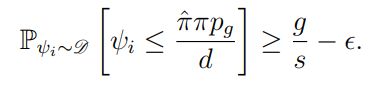

then with high probability, the algorithm CrowdSort(T, s, v, r, δ) identifies all “bad” agents. Therefore, it suffices to assign each comparison to at least one “good” agent for the true value of the comparison to be returned. By Remark 3.3, we know that for any agent ai,

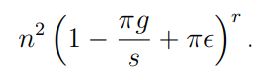

So the probability that all r agents assigned to a single comparison are “bad” is at most:

Taking the union bound over all comparisons, we have that the probability there exists a comparison such that all agents assigned to it are “bad” is at most

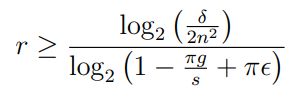

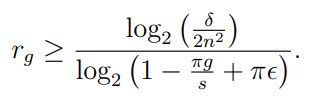

Upper-bounding the above probability by δ/2, we get that:

Finally, notice that the above inequality and Inequality (6) hold simultaneously with probability at least 1 − δ/2.

3.4 Principal’s incentive

We combine the results presented above in the following theorem, in which we additionally prove that it is possible for the principal to efficiently compute the optimal number of agents to incentivize. Based on Lemma 3.5, we use the notation

and

This paper is available on